In the high-stakes world of industrial automation and real-time monitoring, the ability to store and process data in chronological order is no longer a luxury; it is a fundamental requirement. As billions of sensors across the globe generate continuous streams of telemetry, traditional data storage methods are being replaced by more agile, specialized architectures. Many enterprises are now adopting dedicated time series database (TSDB) solutions to manage these massive ingestion rates while maintaining the low-latency query performance required for instant decision-making. By prioritizing time as the primary organizational axis, these systems turn raw operational noise into a clear, historical narrative.

The Structural Logic of Time-Indexed Storage

The primary advantage of a dedicated temporal engine lies in its "append-only" storage model. Unlike relational databases, which must manage complex row locks and transactional overhead for frequent updates, a time series system is optimized for a constant, high-velocity influx of new information. By writing data points to disk in the order they occur, the system creates a naturally indexed sequence that allows for very fast range scans.
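To make the range-scan argument concrete, here is a minimal in-memory sketch. The `AppendOnlySeries` class and its methods are hypothetical, not any product's API, and the sketch assumes strictly time-ordered writes (many real engines tolerate some out-of-order data). Because timestamps stay sorted, a range query reduces to two binary searches and one contiguous slice.

```python
from bisect import bisect_left, bisect_right
from typing import List, Tuple


class AppendOnlySeries:
    """Hypothetical toy series: points are only ever appended in time order."""

    def __init__(self) -> None:
        self.timestamps: List[int] = []
        self.values: List[float] = []

    def append(self, ts: int, value: float) -> None:
        # Enforce the append-only contract assumed here: no out-of-order writes, no updates.
        if self.timestamps and ts < self.timestamps[-1]:
            raise ValueError("out-of-order write rejected")
        self.timestamps.append(ts)
        self.values.append(value)

    def range_scan(self, start: int, end: int) -> List[Tuple[int, float]]:
        # Sorted timestamps let two binary searches bound the range [start, end];
        # the matching points are then one contiguous slice.
        lo = bisect_left(self.timestamps, start)
        hi = bisect_right(self.timestamps, end)
        return list(zip(self.timestamps[lo:hi], self.values[lo:hi]))
```

For example, after appending points at timestamps 10, 20, 30 and 40, `range_scan(15, 30)` returns the points at 20 and 30 without touching the rest of the series.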

This linear storage approach also facilitates superior hardware utilization. Because the database knows that data is arriving in a predictable sequence, it can batch incoming points in memory buffers and flush them to disk as large sequential writes rather than many small random ones. Depending on the engine and workload, this can allow a system to absorb up to millions of writes per second on relatively modest hardware, providing a cost-effective path for companies looking to scale their IoT or observability infrastructure.
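The buffering idea can be sketched as follows. `WriteBuffer` is a hypothetical name, the `capacity` threshold is an illustrative assumption, and the in-memory `blocks` list merely stands in for files written to disk; real engines add write-ahead logging, compression and compaction on top of this pattern.

```python
from typing import List, Tuple

Point = Tuple[int, float]  # (timestamp, value)


class WriteBuffer:
    """Hypothetical write path: batch points in memory, flush them as one sequential block."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.pending: List[Point] = []
        self.blocks: List[List[Point]] = []  # stand-in for immutable on-disk blocks

    def write(self, ts: int, value: float) -> None:
        self.pending.append((ts, value))
        if len(self.pending) >= self.capacity:
            self.flush()

    def flush(self) -> None:
        if self.pending:
            # Sort defensively in case of slight reordering, then emit one large
            # sequential write instead of many small random ones.
            self.blocks.append(sorted(self.pending))
            self.pending = []
```

With a capacity of 3, seven writes produce two flushed blocks and leave one point pending until the next flush.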

Advanced Compression and Data Density

Managing the sheer volume of information generated by modern sensor networks requires a sophisticated approach to data density. Time series engines employ specialized compression algorithms that take advantage of the repetitive nature of temporal data. For example, if a temperature sensor reports the same value several times in a row, the database can use "run-length encoding" to store that value once alongside a count, drastically reducing the physical space required.
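A minimal sketch of run-length encoding over a list of sensor readings follows. The function names are hypothetical; production engines apply this idea at the bit level inside compressed blocks rather than on Python lists.

```python
from typing import List, Tuple


def rle_encode(values: List[float]) -> List[Tuple[float, int]]:
    """Collapse runs of identical consecutive values into (value, count) pairs."""
    runs: List[Tuple[float, int]] = []
    for v in values:
        if runs and runs[-1][0] == v:
            # Same value as the previous reading: extend the current run.
            runs[-1] = (v, runs[-1][1] + 1)
        else:
            # Value changed (or first reading): start a new run.
            runs.append((v, 1))
    return runs


def rle_decode(runs: List[Tuple[float, int]]) -> List[float]:
    """Expand (value, count) pairs back into the original sequence."""
    out: List[float] = []
    for value, count in runs:
        out.extend([value] * count)
    return out
```

For instance, the readings `[21.5, 21.5, 21.5, 21.5, 22.0, 22.0, 21.5]` encode to `[(21.5, 4), (22.0, 2), (21.5, 1)]`. Only consecutive repeats collapse, which is why the technique suits slowly changing sensors.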

Beyond simple repetition, these systems use "delta-encoding" to store only the difference between each timestamp or value and the one before it. This level of efficiency allows organizations to retain high-resolution data for much longer periods than would be possible with standard storage. Lowering the data footprint doesn't just save on cloud storage bills; it also accelerates query performance, as there is less data to read from disk and move across the network when generating a report.
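The sketch below shows plain delta encoding on integer data such as Unix timestamps. It is illustrative only and assumes integer inputs; real engines typically pair deltas with variable-length or bit-packed integer encodings, and use different schemes for floating-point values.

```python
from itertools import accumulate
from typing import List


def delta_encode(xs: List[int]) -> List[int]:
    """Keep the first value, then store only each value's difference from its predecessor."""
    if not xs:
        return []
    return [xs[0]] + [b - a for a, b in zip(xs, xs[1:])]


def delta_decode(deltas: List[int]) -> List[int]:
    """Rebuild the original values with a running sum over the deltas."""
    return list(accumulate(deltas))
```

For timestamps sampled every 10 seconds, `[1700000000, 1700000010, 1700000020, 1700000030]` becomes `[1700000000, 10, 10, 10]`: one large number followed by small, repetitive ones that are far cheaper to store and that run-length encoding can compress further.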

Evaluating the Global Market Landscape

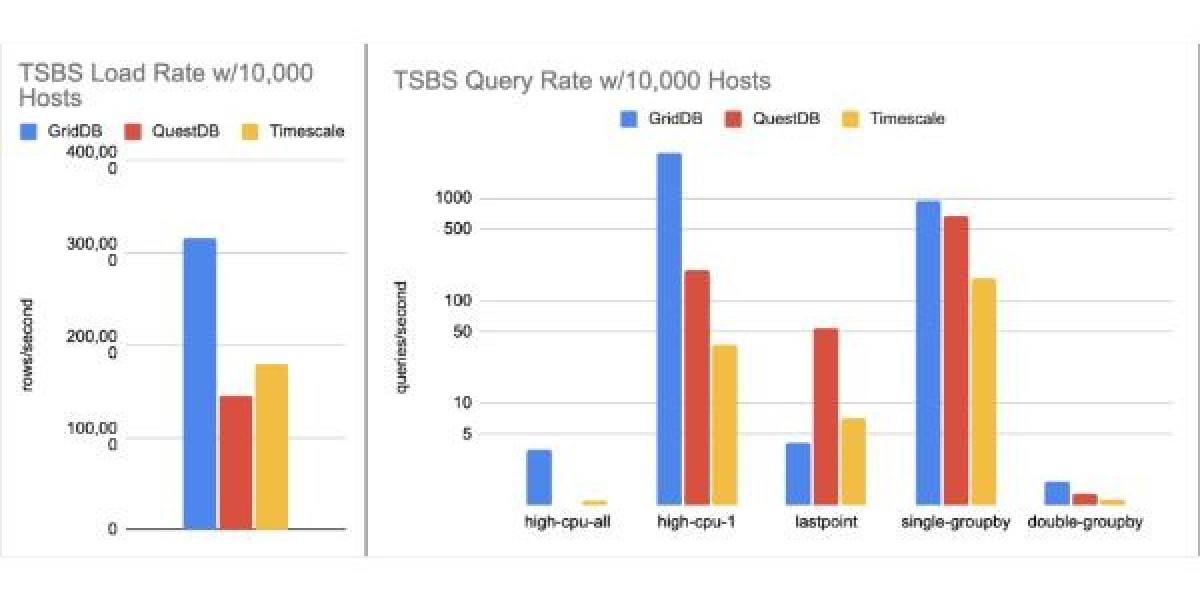

Selecting the right technology partner requires a deep understanding of how different platforms handle real-world stress. When reviewing current time series database rankings, it becomes clear that the most resilient platforms are those designed with horizontal scalability in mind. As a project grows from a pilot phase to a global deployment, the database must be able to distribute its workload across multiple nodes without requiring a complete re-architecture of the application.
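One common way to spread time series data across nodes is to shard by series and by time window. The sketch below is a hypothetical scheme, not any specific product's placement logic: it hashes the series key to pick a node and truncates the timestamp to a bucket (one hour here, an assumed default). A stable hash (SHA-256) is used because Python's built-in `hash()` for strings is randomized per process.

```python
import hashlib
from typing import Tuple


def assign_shard(series_key: str, ts: int, num_nodes: int,
                 bucket_seconds: int = 3600) -> Tuple[int, int]:
    """Map a point to (node, time_bucket_start) under a hypothetical sharding scheme."""
    # Hash the series key so different series spread evenly over the nodes,
    # while every point of one series lands on the same node.
    digest = hashlib.sha256(series_key.encode("utf-8")).digest()
    node = int.from_bytes(digest[:8], "big") % num_nodes
    # Truncate the timestamp so each shard covers a bounded time window.
    bucket = ts - (ts % bucket_seconds)
    return node, bucket
```

A plain modulo reassigns most series when `num_nodes` changes; systems that add nodes online usually prefer consistent hashing or an explicit placement map for that reason.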

Modern rankings also emphasize the importance of "open standards" and interoperability. A leading database should not exist in a vacuum; it must connect effortlessly to existing data visualization tools, alerting frameworks, and machine learning pipelines. This ecosystem-centric approach ensures that the database acts as a flexible hub for all time-stamped information, rather than a closed silo that is difficult to integrate.

Industrial Transparency and Digital Twins

In the realm of smart manufacturing, time series data is the primary ingredient for creating "Digital Twins"—virtual replicas of physical assets. By recording every vibration, pressure change, and electrical pulse, a TSDB provides the high-fidelity history needed to simulate how a machine will behave under different conditions. This allows engineers to test operational changes in a virtual environment before applying them to expensive physical hardware.

This level of transparency is also transforming the renewable energy sector. Solar and wind farms rely on granular historical data to predict future output based on weather patterns. By analyzing years of performance data stored in a specialized engine, utility companies can optimize grid stability and ensure that they are making the most efficient use of every kilowatt produced.

Streamlining Analytics with Native Temporal Functions

The true value of a database is unlocked during the analytical phase. Specialized temporal engines provide a suite of native functions designed to handle common time-based calculations, such as moving averages, cumulative sums, and rate-of-change analysis. By executing these calculations inside the database engine, close to where the data is stored, the system avoids the need to pull massive amounts of raw data into an external application, which significantly reduces the "time-to-insight."
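For readers unfamiliar with these functions, the Python below shows the arithmetic they perform. It runs client-side purely for illustration; the function names are hypothetical and do not correspond to any particular query language. A TSDB would evaluate the equivalent logic server-side.

```python
from itertools import accumulate
from typing import List


def moving_average(values: List[float], window: int) -> List[float]:
    """Trailing simple moving average; emits one value per full window."""
    if window <= 0:
        raise ValueError("window must be positive")
    # Prefix sums let each window's total be computed with one subtraction.
    sums = [0.0] + list(accumulate(values))
    return [(sums[i] - sums[i - window]) / window for i in range(window, len(sums))]


def cumulative_sum(values: List[float]) -> List[float]:
    """Running total of all values seen so far."""
    return list(accumulate(values))


def rate_of_change(timestamps: List[int], values: List[float]) -> List[float]:
    """Per-second rate between consecutive points: (v[i] - v[i-1]) / (t[i] - t[i-1])."""
    return [
        (values[i] - values[i - 1]) / (timestamps[i] - timestamps[i - 1])
        for i in range(1, len(values))
    ]
```

For example, `moving_average([1, 2, 3, 4, 5], 3)` yields `[2.0, 3.0, 4.0]`, and readings of 100, 150 and 250 taken 10 seconds apart give rates of 5.0 and 10.0 per second.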

This internal processing power is vital for real-time alerting. Instead of waiting for a manual check, the system can be configured to trigger an automated response if a metric deviates from its historical norm. Whether it is identifying a security breach in a network or a cooling failure in a data center, the ability to process logic at the point of ingestion is a critical safeguard for modern infrastructure.
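"Deviates from its historical norm" can be defined in many ways. The sketch below uses one simple, assumed rule, a z-score test against recent history, with a hypothetical function name and an illustrative default threshold of three standard deviations. Production alerting usually adds rolling windows, seasonality handling and debouncing.

```python
from statistics import mean, pstdev
from typing import List


def is_anomalous(history: List[float], latest: float, threshold: float = 3.0) -> bool:
    """Flag `latest` if it lies more than `threshold` standard deviations from the history's mean."""
    if len(history) < 2:
        return False  # not enough history to define a norm
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        return latest != mu  # flat history: any change counts as a deviation
    return abs(latest - mu) / sigma > threshold
```

With a data-center temperature history hovering around 20 °C, a reading of 20.4 passes quietly, while a reading of 35.0 would trigger the automated response.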

Technical Auditing and Performance Diagnostics

As data complexity increases, it is essential to analyze how a system such as InfluxDB manages "high cardinality": a situation where the database tracks millions of unique combinations of tags and metadata. Managing this without exhausting system memory is a hallmark of an enterprise-grade solution. Advanced engines use inverted indexes and bitmap structures to ensure that searching for a specific device among millions remains a sub-second operation.
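The inverted-index-plus-bitmap idea can be sketched in a few lines. `TagIndex` is hypothetical, and Python integers stand in for compressed bitmaps (bit *i* set means series *i* carries that tag). Real engines use compressed formats such as Roaring bitmaps and keep the index partly on disk. A multi-tag query is a bitwise AND of the postings.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


class TagIndex:
    """Hypothetical inverted index: each (tag, value) pair maps to a bitmap of series IDs."""

    def __init__(self) -> None:
        self.postings: Dict[Tuple[str, str], int] = defaultdict(int)
        self.series_count = 0

    def add_series(self, tags: Dict[str, str]) -> int:
        sid = self.series_count
        self.series_count += 1
        for pair in tags.items():
            self.postings[pair] |= 1 << sid  # set this series' bit in each posting
        return sid

    def query(self, **tags: str) -> List[int]:
        """Return IDs of series matching every tag filter (bitwise AND of postings)."""
        result = (1 << self.series_count) - 1  # start with every series selected
        for pair in tags.items():
            result &= self.postings.get(pair, 0)  # unknown tag pair matches nothing
        return [i for i in range(self.series_count) if (result >> i) & 1]
```

After registering series tagged `region=eu,host=h1`, `region=us,host=h2` and `region=eu,host=h3`, `query(region="eu")` returns `[0, 2]` and `query(region="eu", host="h3")` returns `[2]`.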

Regular diagnostics also help in identifying "hotspots" in data ingestion. By monitoring how different series contribute to the overall system load, administrators can fine-tune sharding strategies and retention policies to ensure consistent performance. This proactive approach to database management ensures that the infrastructure remains a robust asset even as the underlying business grows and evolves.
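A basic hotspot check is to measure each series' share of ingested points over a sampling period. The sketch below assumes you can obtain a stream of series keys for recently written points; the function name and input format are hypothetical.

```python
from collections import Counter
from typing import Iterable, List, Tuple


def ingestion_hotspots(series_keys: Iterable[str], top_n: int = 3) -> List[Tuple[str, float]]:
    """Return the top_n series by share of ingested points (fraction of the total)."""
    counts = Counter(series_keys)
    total = sum(counts.values())
    if total == 0:
        return []
    return [(key, n / total) for key, n in counts.most_common(top_n)]
```

If six of ten sampled points belong to `cpu,host=a`, that series accounts for 60 % of the load and is a candidate for a different sharding or retention policy.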

The Future of Distributed Data Intelligence

The next frontier for data management is the seamless integration of edge and cloud environments. As 5G connectivity becomes standard, more processing will occur on local gateways situated directly next to the physical sensors. This requires a database architecture that can function autonomously at the edge while periodically synchronizing its state with a centralized cloud repository for global aggregation.

This distributed model also enhances data security and sovereignty. By keeping granular, sensitive data at the local site and only transmitting anonymized summaries to the central hub, organizations can meet strict regulatory requirements while still benefiting from large-scale analytical trends. This balance of local control and global visibility is the defining feature of a modern, resilient data strategy.
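The "summaries, not raw data" pattern amounts to downsampling at the edge. The sketch below groups raw `(timestamp, value)` points into fixed windows and keeps only count, min, max and mean per window; the function name and window size are illustrative assumptions. Note that aggregation reduces what leaves the site, but whether the result qualifies as anonymized depends on the data and the regulation in question.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def summarize_windows(points: List[Tuple[int, float]],
                      window_seconds: int) -> Dict[int, Dict[str, float]]:
    """Downsample raw (timestamp, value) points into per-window aggregates.

    In this hypothetical setup only the summaries are synced to the central hub;
    the raw points stay at the edge site.
    """
    buckets: Dict[int, List[float]] = defaultdict(list)
    for ts, value in points:
        buckets[ts - ts % window_seconds].append(value)  # key = window start time
    return {
        start: {
            "count": len(vals),
            "min": min(vals),
            "max": max(vals),
            "mean": sum(vals) / len(vals),
        }
        for start, vals in sorted(buckets.items())
    }
```

With a 60-second window, five raw readings collapse into two summary records, one per minute.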

Constructing a Sustainable Infrastructure

Building a sustainable data infrastructure requires a commitment to using the right tool for the job. By adopting a system specifically designed for the unique properties of temporal data, companies can avoid the performance bottlenecks and maintenance headaches associated with legacy database models. This strategic choice allows for faster innovation, more reliable monitoring, and a clearer path toward becoming a truly data-driven organization.

Ultimately, the goal of any time series initiative is to provide a window into the past that illuminates the path to the future. As the world becomes more instrumented and the volume of data continues to climb, the ability to capture and interpret the flow of time will remain a primary competitive advantage. Mastering this data stream is the key to unlocking new levels of operational excellence across every modern industry.